The promise of undetectable AI content appeals to many writers, students, and business professionals. The idea sounds simple: use the speed and accessibility of large language models (LLMs) such as Gemini, ChatGPT, or Claude, while producing output that modern AI detection programs do not flag.

Many tools on the market today claim they can hide your AI writing from detection completely, but the reality is far more complicated. Working in SEO, I understand why strong, undetectable writing matters, but it is worth being realistic about how hard true undetectability is. A more useful goal is to make your content genuinely people-focused.

Why doesn't true AI undetectability work?

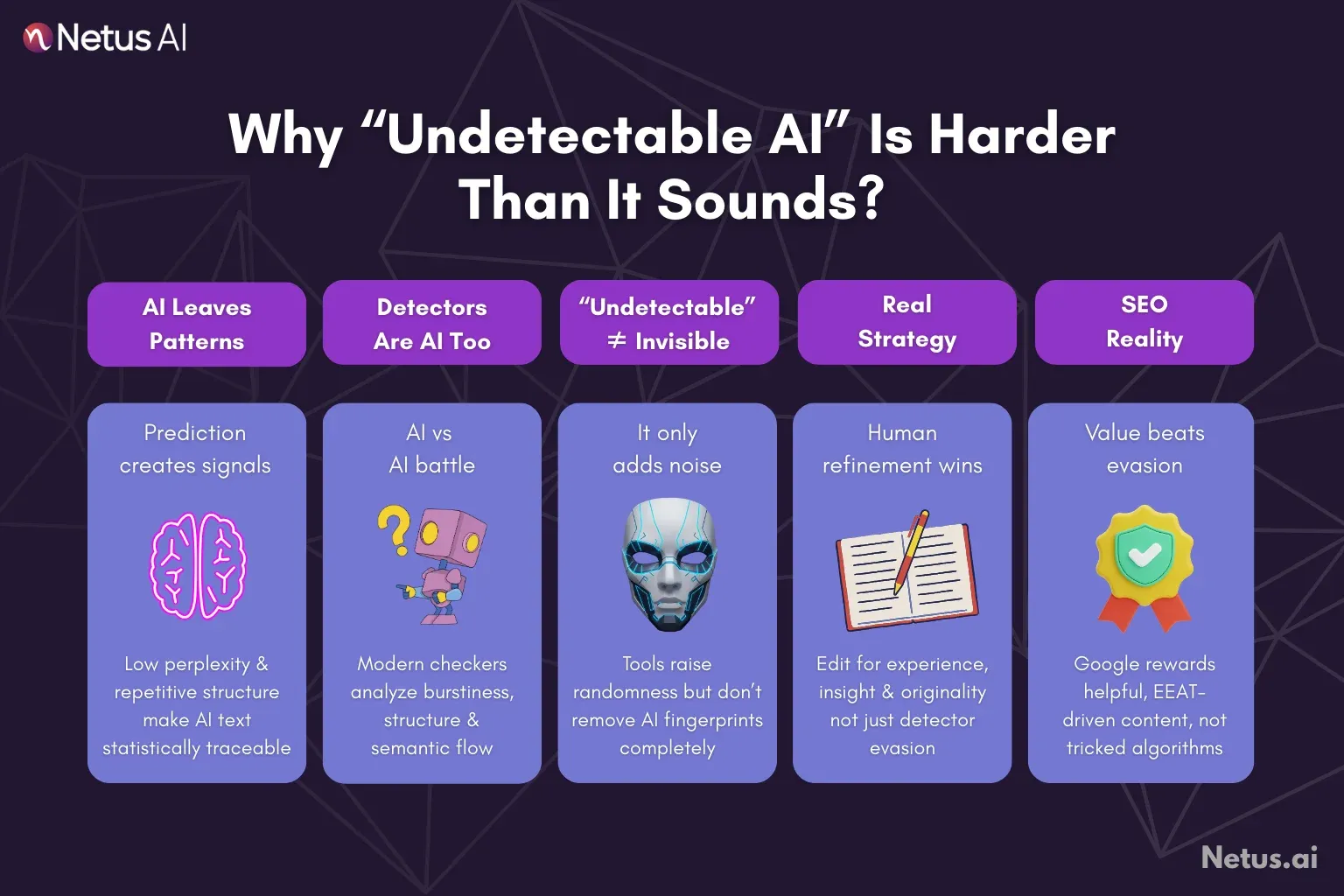

The core problem lies in how LLMs generate language. They are pattern-driven: at each step they predict a likely next word based on patterns learned from very large datasets. This reliance on prediction leaves characteristic traces in AI writing, which experts describe as low perplexity and low burstiness. These are exactly the signals AI detectors are trained to find.

Understanding AI checkers

Fun fact: AI checkers are built with AI, too. They evaluate several signals, for example:

Perplexity (amount of surprise)

Human writing is often irregular and full of unpredictable elements: people choose unusual wording and drift off topic. Perplexity measures how well a language model can predict a piece of text, so the more predictable each word is, the lower the score. AI tends to pick the safest, most probable word to keep the text smooth, which results in low perplexity.
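To make "low perplexity" concrete: perplexity is the exponential of the average negative log-probability a language model assigns to each token of a text, PPL = exp(-(1/N) Σ log p(token_i | previous tokens)). The Python sketch below computes it from a list of per-token probabilities. The probability values are made up for illustration, not output from any real model or detector, and real detectors combine this score with many other signals.

```python
import math


def perplexity(token_probs: list[float]) -> float:
    """Perplexity from the probabilities a language model assigned to each
    token that actually appeared: exp of the average negative log-probability."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    if any(not 0.0 < p <= 1.0 for p in token_probs):
        raise ValueError("probabilities must be in (0, 1]")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Hypothetical per-token probabilities, invented for illustration only.
predictable = [0.9, 0.8, 0.85, 0.9]  # the model expected almost every word
surprising = [0.2, 0.05, 0.3, 0.1]   # the model was often surprised

print(round(perplexity(predictable), 2))
print(round(perplexity(surprising), 2))
```

The predictable sequence scores close to 1, while the surprising one scores several times higher, which is the gap detectors look for.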

Burstiness (varied sentences)

People vary their sentences irregularly in length and structure. AI models tend to produce sentences of similar length and form with near-perfect grammar, which makes the output look robotic to a checker.
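There is no single standard formula for burstiness; some detectors measure how perplexity varies from sentence to sentence. As a simpler stand-in, this sketch assumes burstiness can be approximated by the coefficient of variation of sentence length (standard deviation divided by mean). The function names and the naive sentence splitter are illustrative assumptions, not any detector's actual method.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    # Naive split after ".", "!" or "?" followed by whitespace;
    # real tools use proper sentence tokenizers.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).
    0 means every sentence has the same length; higher means more variation."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The tool is fast. The tool is cheap. The tool is easy."
varied = ("It broke. Nobody on the team could explain why the nightly build "
          "had failed again. We sighed.")

print(round(burstiness(uniform), 3))
print(round(burstiness(varied), 3))
```

The uniform text, with three four-word sentences, scores 0, while the varied text scores well above it.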

Words and structure

AI detectors also look for specific markers, such as overused transitions or a formal, academic tone where a relaxed style would be more natural.
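One of the simplest marker features is how often stock transitions appear. This sketch counts a hypothetical list of transition phrases and reports them per 100 words. Both the word list and the per-100-words rate are assumptions for illustration; commercial detectors learn their features from training data rather than from a fixed list.

```python
import re

# Hypothetical marker list for illustration only; real detectors learn
# their own features from training data instead of using a fixed list.
TRANSITIONS = ("moreover", "furthermore", "additionally", "in conclusion", "overall")


def transition_counts(text: str) -> dict[str, int]:
    """Count whole-word, case-insensitive occurrences of each transition."""
    lowered = text.lower()
    return {
        phrase: len(re.findall(r"\b" + re.escape(phrase) + r"\b", lowered))
        for phrase in TRANSITIONS
    }


def transition_rate(text: str) -> float:
    """Transitions per 100 words (a word is a run of letters or apostrophes)."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    return 100.0 * sum(transition_counts(text).values()) / len(words)


sample = "Moreover, it works. Furthermore, it is cheap. Overall, good."
print(transition_counts(sample))
print(round(transition_rate(sample), 1))
```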

When a tool promises to make text undetectable, what it usually does is raise the perplexity and burstiness of the content artificially. That does not achieve real invisibility; it mainly confuses the detector.

Combining tools to disguise and improve content

For AI users who want to work responsibly while lowering the chance of being flagged, the best approach is to combine several tools aimed at improving the quality of the writing.

AI bypasser

The most direct option is a so-called AI bypasser. This program takes flat, obviously AI-generated text and reworks it: it substitutes words, varies sentence structures, and adds some randomness so that, to the algorithms, the text looks more like a person wrote it. For heavy AI users who need their text to get past basic checkers, this is a useful step.

Paraphrase tools

Another strong option is a high-end Paraphrasing Tool. Good paraphrasers do more than swap in synonyms; they also restructure whole sentences and adjust the feel of the writer's voice.

Running AI-generated text through a paraphraser substantially changes its structure and breaks up the language patterns the AI left behind. This matters when the new content needs to be sufficiently distinct, or when the same text will be reused in several places.

AI detectors

Always check your content before publishing it. The AI Detector acts as the final test: run it before you submit or upload your text to see whether your last changes (paraphrasing, bypassing) worked. This step matters because it creates a feedback loop, letting you revise the content until it meets the human-likeness and originality levels you require.

SEO article generator

While those tools keep content from being flagged as AI, the real goal is valuable writing that can reach the top of search results. An SEO article generator shifts the focus from fooling AI detectors to giving readers useful information and value.

These tools are built to handle SEO metrics such as keyword frequency and topical relevance, helping the text align with Google's E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) guidelines. Over time, this is how top rankings are actually built.
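Keyword frequency is usually reported as keyword density. Definitions vary between tools; this sketch assumes density is the share of all words covered by exact, non-overlapping, case-insensitive matches of the keyword phrase, expressed as a percentage. The function names are hypothetical, and the result does not reflect any particular SEO tool's scoring.

```python
import re


def tokenize(text: str) -> list[str]:
    # Lowercase runs of letters, digits, and apostrophes; punctuation is dropped.
    return re.findall(r"[a-z0-9']+", text.lower())


def keyword_density(text: str, keyword: str) -> float:
    """Percentage of words in `text` covered by matches of `keyword`.

    Assumed definition: (matches * words in keyword) / total words * 100.
    Some tools instead use matches / total words * 100.
    """
    words = tokenize(text)
    phrase = tokenize(keyword)
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    matches, i = 0, 0
    while i <= len(words) - n:
        if words[i:i + n] == phrase:
            matches += 1
            i += n  # skip past the match so overlapping hits are not double-counted
        else:
            i += 1
    return 100.0 * matches * n / len(words)


article = "AI detector tips: an AI detector scores text. Pick a good AI detector."
print(round(keyword_density(article, "AI detector"), 1))
```

Here the two-word phrase appears three times in a 13-word text, so it covers 6 of 13 words. A very high density like this is usually a sign of keyword stuffing rather than good optimization.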

NetusAI: All-in-one system for AI content management

For people seeking undetectable content, the process is usually fragmented: drafting with one AI content generator, checking with another system, editing manually, and then optimizing again.

This is inefficient. Platforms such as NetusAI bundle all of these steps into a single system. Instead of juggling separate software, users can start by creating an initial draft with an SEO article generator, which takes care of basic SEO.

They can then move the draft straight into an AI Bypass or Paraphraser Tool to add human-like variation and stronger structure, and finally check the result in the built-in AI Detector. This workflow turns the pressure of producing "undetectable AI" content into a supervised, step-by-step process.

Final thoughts

For the most part, "undetectable AI" is marketing talk. Pure undetectability is a moving target, because detectors and generators keep evolving in response to each other. The best content strategy accepts this and shifts the goal from simply hiding AI to making AI writing more human-like.

By combining an AI bypasser, a paraphrasing tool, and an AI detector with the standards built into an SEO article generator, content creators can produce articles at scale that both meet search engine requirements and hold up against the rigorous checks of newer detection models. The point is not to cheat a computer checker; it is to genuinely raise the quality of your writing.

FAQs

What is the basic difference between human and machine-generated text?

The main differences are perplexity and burstiness. Machine-generated writing is usually quite predictable (low perplexity) and uses similar sentence types throughout (low burstiness). Human writers make unexpected word choices, and their rhythm keeps changing.

Will AI bypassers work for me 100 percent of the time?

No system can guarantee that, because detection software keeps improving. Bypassers can disrupt the telltale patterns and greatly reduce the chance of being flagged, but they cannot eliminate it entirely.

If I am using ChatGPT or Gemini, should I still use an SEO article generator?

General-purpose LLMs like these focus on producing coherent, logical text, while SEO article generators focus on keyword placement and ranking. SEO tools usually also include features for keyword checking, emphasizing meaningful phrases, and aligning content with guidelines like E-E-A-T, so the final content is not only easy to read but also better positioned to rank.

If you use an AI Paraphraser Tool, are you plagiarizing?

If all the content comes from your own AI-generated drafts, using a paraphraser simply improves its quality. But using it on someone else's protected text to evade detection is wrong and counts as plagiarism, whether in a professional or academic setting.

How fast are detection programs changing?

AI detectors are retrained and updated whenever new datasets of AI-generated text become available. That is why it pays to keep using more than one tool and to run a final check with an AI detector for greater control over output quality.